The ROOT of all evil, 20 years later

I was contacted today by an undergrad student who has just completed a research project in particle physics using the ROOT analysis framework, and had a … mixed experience. It sounds like they did a great job, really prepared to try new things to speed up and otherwise improve the processing, but something about the experience also led them to my now rather ancient article about ROOT, and prompted them to get in touch about it.

That article was written just after my PhD, now almost exactly 20 years ago — it’s only on writing this that I realise my first postdoc contract started in April 2005, and my belated graduation in March or April 2006, with the article written somewhere in-between. In it, I was reflecting on my PhD experiences with ROOT, as contrasted with what I had learned and was still learning about good software design and development. Every now and again I skim through it once more, and find that for a relative neophyte my judgements on good practice were surprisingly spot-on.

Maybe a little preachy, certainly purist/naive about workload… but then, I have come to think that the strongest distinguishing feature between a mediocre and an excellent (scientific) software developer, particularly when it comes to frameworks that others will use programmatically, is readiness to put in the effort to re-engineer something that technically works, because it could be better.

ROOT has, since 1991 and certainly up to the end of the v5 series, largely been defined by the aggregation of ever more half-assed, kitchen-sink features (and the assimilation of other libraries that had been more useful standalone), and by an unwillingness to revisit suboptimal designs in its core, most heavily used parts. ROOT6 started to overturn that, with the many-year Cling project to replace the Cint C++ interpreter (billed as a quick collaboration with LLVM in 2008, it finally released a first version in 2014), and has since added features like RDataFrame in response to the popularity of dataframe structures as introduced by Pandas and other Python tools. From version 7 (I’m sure I’ve been seeing talks since at least 2019) there’s finally a revisiting of the histogram API, the plotting backends and rendering quality, etc. So maybe the lesson of iterating and improving has arrived with the new, I think third, generation of developers.

But is it in time?

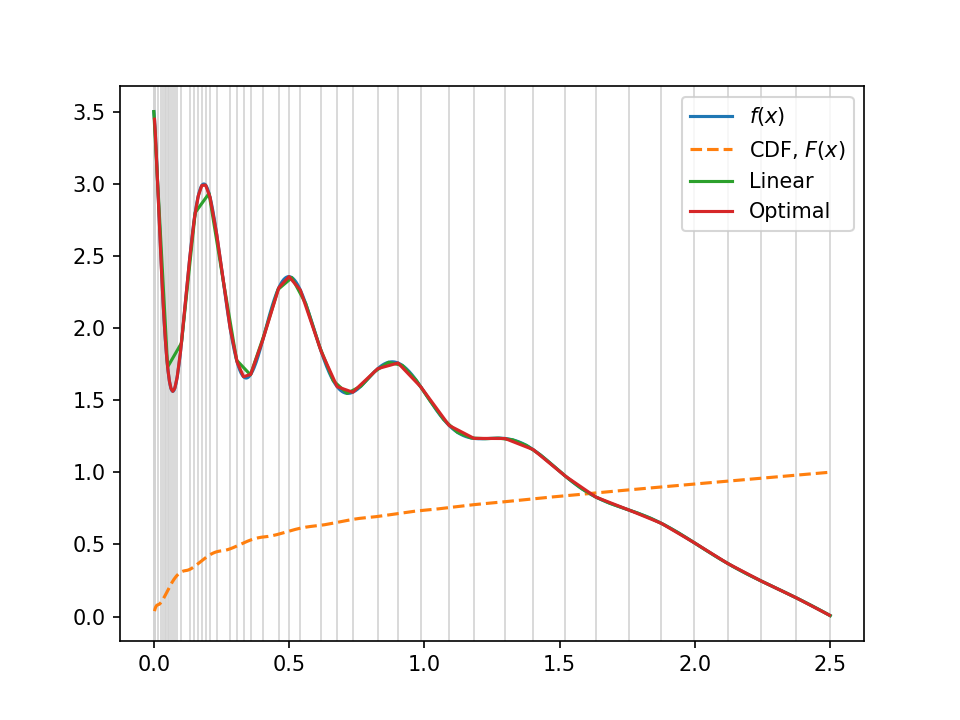

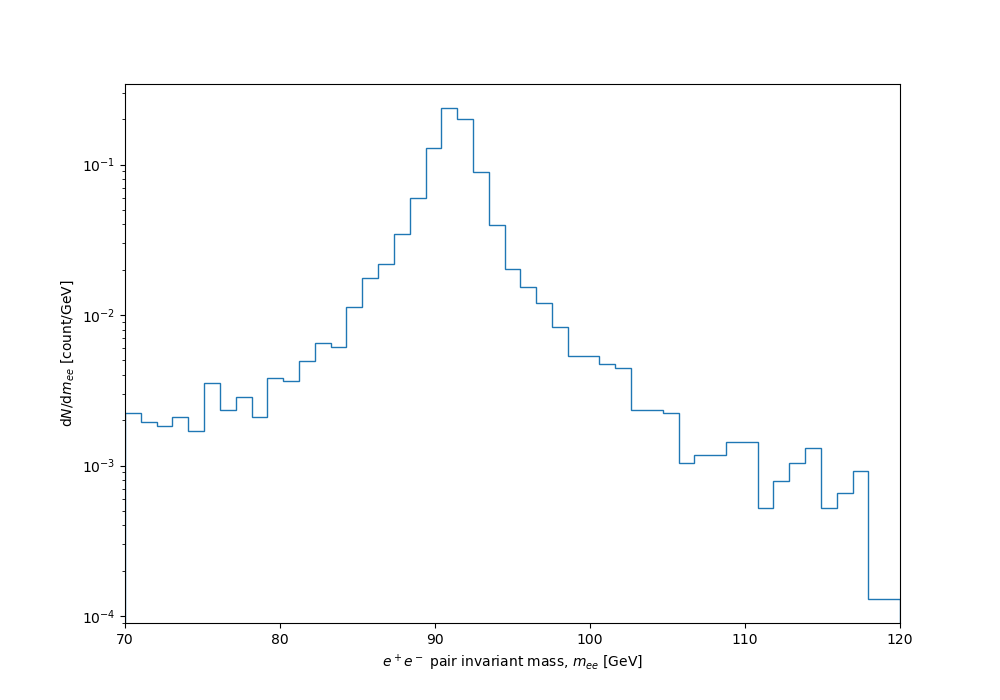

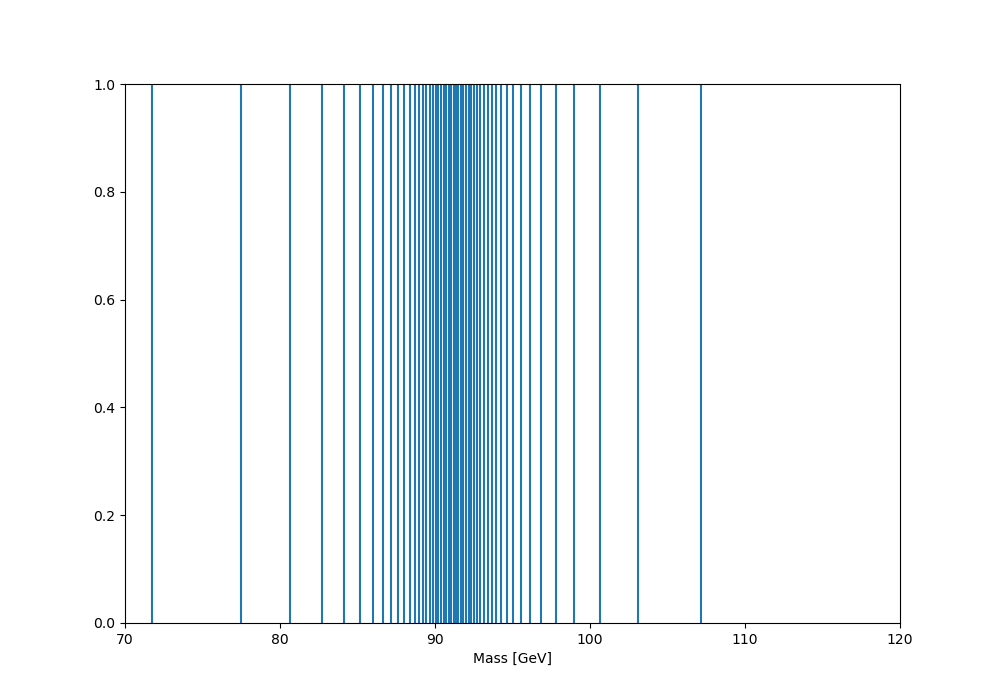

Back to the student enquiry, which asked me how I feel about the tool now, many years later. Honestly, I minimise my contact with ROOT, and have done ever since those first encounters. There have always been other ways, and much of what its core features do is so uncomplicated that anyone can spin it up. That was true in the C++ days, and it’s super-true in the current, more Python-oriented world. I’m also a great believer in “write it yourself, once” as the best way to truly understand a concept. Maybe I’m a bit slow, but I would never have truly grokked sampling, or likelihood profiling, or myriad other things, if I hadn’t made myself write code snippets implementing them until the penny dropped. That is a world apart from learning to write the config file for someone else’s implementation, and it’s the sort of thing you can only take the time to do during the PhD or postdoc “self-learning years”. Every now and again I reconnect, e.g. to write the bidirectional YODA/ROOT histogram converters, and depart vowing not to go there again for some time.
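As an illustration of how little machinery a core feature like weighted histogramming needs, here is a minimal Python sketch. It is not ROOT’s TH1 implementation: the class name `Hist1D` is hypothetical, and its layout (bin 0 as underflow, bin `nbins + 1` as overflow, per-bin error as the square root of the summed squared weights) is an assumption chosen for illustration, loosely mirroring the conventions ROOT users will recognise.

```python
import math


class Hist1D:
    """Minimal fixed-width 1D histogram (hypothetical, for illustration).

    Bin 0 is underflow and bin nbins + 1 is overflow. Each bin tracks the sum
    of weights and the sum of squared weights, so weighted fills get the usual
    sqrt(sum w^2) statistical error.
    """

    def __init__(self, nbins, lo, hi):
        if nbins < 1 or hi <= lo:
            raise ValueError("need nbins >= 1 and hi > lo")
        self.nbins, self.lo, self.hi = nbins, lo, hi
        self.sumw = [0.0] * (nbins + 2)
        self.sumw2 = [0.0] * (nbins + 2)

    def index(self, x):
        """Map a value to its bin index, including under/overflow."""
        if x < self.lo:
            return 0
        if x >= self.hi:
            return self.nbins + 1
        width = (self.hi - self.lo) / self.nbins
        # min() guards against floating-point round-off just below hi
        return 1 + min(int((x - self.lo) / width), self.nbins - 1)

    def fill(self, x, w=1.0):
        """Add weight w at value x, accumulating w and w^2."""
        i = self.index(x)
        self.sumw[i] += w
        self.sumw2[i] += w * w

    def error(self, i):
        """Statistical error on bin i: sqrt of the summed squared weights."""
        return math.sqrt(self.sumw2[i])


if __name__ == "__main__":
    h = Hist1D(4, 0.0, 1.0)
    for x, w in [(0.1, 1.0), (0.3, 2.0), (0.3, 2.0), (1.5, 1.0), (-0.2, 0.5)]:
        h.fill(x, w)
    print(h.sumw)      # [0.5, 1.0, 4.0, 0.0, 0.0, 1.0]
    print(h.error(2))  # sqrt(8), about 2.828
```

Adding two such histograms is just element-wise addition of `sumw` and `sumw2`, which is part of why writing one yourself, once, is a quick way to see what a framework histogram is really doing.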

So I use it for file I/O only, and even there prefer third-party tools like uproot; I would never touch it for plotting these days. I think one of the greatest harms ROOT has done to the physics community is to conflate data processing with presentation: in any sane workflow these are separate things. And in general, staging and filtering data to ease reprocessing (especially the very many iterations needed for final-plot and uncertainty studies) is an important skill that we don’t train or emphasise enough.
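A minimal sketch of that separation, under loudly stated assumptions: a toy list of event dictionaries stands in for a real input file (which one might read with uproot), JSON stands in for whatever compact staging format a real analysis would use, a text rendering stands in for a real plotting library, and the function names `stage` and `present` and the two-jet selection are hypothetical. The point is only that the expensive pass over the data writes a small summary once, and every restyling of the plot rereads that summary rather than the input.

```python
import json
import os
import tempfile


def stage(events, path, nbins=5, lo=0.0, hi=100.0):
    """Processing step (hypothetical): select events, bin one variable, and
    write a small summary file. Run once over the large input."""
    width = (hi - lo) / nbins
    counts = [0] * nbins
    for ev in events:
        if ev["njets"] < 2:  # illustrative selection cut
            continue
        if lo <= ev["pt"] < hi:
            counts[min(int((ev["pt"] - lo) / width), nbins - 1)] += 1
    edges = [lo + i * width for i in range(nbins + 1)]
    with open(path, "w") as f:
        json.dump({"edges": edges, "counts": counts}, f)


def present(path, mark="#"):
    """Presentation step (hypothetical): read only the staged summary and
    render it; restyling never touches the original events."""
    with open(path) as f:
        h = json.load(f)
    edges, counts = h["edges"], h["counts"]
    rows = []
    for a, b, n in zip(edges[:-1], edges[1:], counts):
        rows.append(f"[{a:5.1f}, {b:5.1f}) {mark * n}")
    return "\n".join(rows)


if __name__ == "__main__":
    events = [
        {"njets": 2, "pt": 10.0},
        {"njets": 3, "pt": 45.0},
        {"njets": 1, "pt": 50.0},  # fails the selection
        {"njets": 2, "pt": 47.0},
    ]
    with tempfile.TemporaryDirectory() as d:
        path = os.path.join(d, "staged.json")
        stage(events, path)              # expensive pass, done once
        print(present(path))             # cheap and repeatable
        print(present(path, mark="*"))   # restyle without reprocessing
```

Keeping the two steps as separate functions with a file between them means the plot-iteration loop never pays the cost of the selection pass.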

My general feeling about ROOT7 is that they’ve missed the boat. In the era when not-invented-here syndrome ruled high-energy physics, researchers and students largely weren’t aware of alternatives, and tolerated / Stockholm-Syndrome’d ROOT as the one way to do analysis. Some even took this out into industry, inflicting ROOT on insurance and banking quants as the guru-level tool of super-scientists in Geneva; to be fair, that sector’s own tools were probably even worse. Everyone was also expected to write C++ then, for which ROOT’s tutorials and API encouraged myriad very poor software-engineering practices. But by virtue of inertia and navel-gazing, it remained the centre of most HEP experimentalists’ lives.

What I see now is an explosion of better-quality plotting and more performant, industry-standard analysis tools from outside particle physics, and this time researchers and students are aware of them and migrating. ROOT is going to remain the main storage and I/O format for HEP raw data, but in LHC collaborations I see it increasingly being ditched in the analysis phase for non-HEP Python-based toolchains. The rise of ML outside the ROOT/TMVA monolith — it is a monolith, despite old claims of modularity — has further encouraged that migration.

So the ROOT 6 and 7 developments of dataframes, new histogramming etc. are nice. Indeed, they look a lot like things I suggested in that article fresh out of my PhD! But this ain’t 2006, and people have a lot more alternatives now. At some point, CERN and the HEP community at large may decide that specialisation is better: ROOT as a focus for our peta/exabyte-scale I/O and filtering, but environments composed of interoperating, cohesively designed small tools as the better way to make a modern ecosystem. In the end, researchers vote with their feet for the tools that work best.